CLIENT:

The client is a multinational healthcare organization whose main goal is to help people live healthy lives. It has more than 115,000 workers and more than 4,000 FLMs in more than 160 countries, and it looks for innovative ways to improve people’s lives. It provides a wide range of industry-leading solutions that complement positive long-term healthcare trends in both developed and emerging economies.

CHALLENGE:

The organization’s literature review process was fragmented, manual, and reliant on external vendors, resulting in high costs, inefficiencies, and poor scalability.

The lack of an internal solution limited flexibility in search strategy design and reduced operational agility. Repetitive manual work delayed research and decision-making, and experts spent significant time on low-value tasks instead of analysis.

SOLUTION:

To address these challenges, the Techelix team developed an LLM-powered agentic workflow to automate and streamline systematic literature reviews. The solution reduces costs, improves efficiency, and enables experts to focus on high-value analysis. It is inspired by the methodology from “A 24-step guide to designing and conducting systematic reviews and meta-analyses in medical research.”

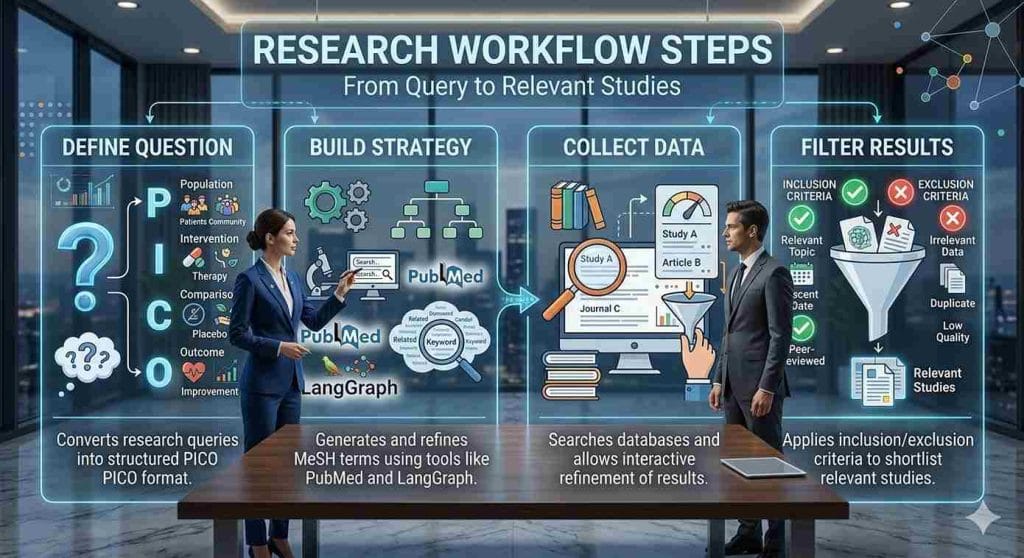

Workflow overview:

- Define question: Converts research queries into structured PICO format.

- Build strategy: Generates and refines MeSH terms using tools like PubMed and LangGraph.

- Collect data: Searches databases and allows interactive refinement of results.

- Filter results: Applies inclusion/exclusion criteria to shortlist relevant studies.
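The first and last steps above can be illustrated with a minimal Python sketch. It is not the production implementation: the `PicoQuestion` class, the `build_search_query` and `screen` functions, and the keyword-based criteria are all hypothetical names and simplifications chosen for illustration. In the workflow described here, an LLM would produce the PICO elements and apply the criteria; plain string matching stands in for both.

```python
from dataclasses import dataclass, field


@dataclass
class PicoQuestion:
    """Hypothetical container for a question in PICO form
    (Population, Intervention, Comparison, Outcome)."""
    population: list[str]
    intervention: list[str]
    comparison: list[str] = field(default_factory=list)
    outcome: list[str] = field(default_factory=list)


def build_search_query(pico: PicoQuestion) -> str:
    """OR the synonyms inside each PICO element, then AND the non-empty elements."""
    groups = []
    for terms in (pico.population, pico.intervention, pico.comparison, pico.outcome):
        if terms:
            groups.append("(" + " OR ".join(f'"{t}"' for t in terms) + ")")
    return " AND ".join(groups)


def screen(records, include_terms, exclude_terms):
    """Split records into (included, excluded) using simple keyword criteria.

    A record is included when its title or abstract mentions at least one
    inclusion term and no exclusion term. This is an assumed stand-in for
    the LLM-based screening step.
    """
    included, excluded = [], []
    for rec in records:
        text = (rec["title"] + " " + rec["abstract"]).lower()
        has_include = any(t.lower() in text for t in include_terms)
        has_exclude = any(t.lower() in text for t in exclude_terms)
        (included if has_include and not has_exclude else excluded).append(rec)
    return included, excluded


# Illustrative data only; the records below are made up.
pico = PicoQuestion(
    population=["type 2 diabetes"],
    intervention=["metformin", "biguanide"],
    outcome=["HbA1c"],
)
query = build_search_query(pico)

records = [
    {"title": "Metformin in adults", "abstract": "Randomized trial of HbA1c change."},
    {"title": "Metformin in mice", "abstract": "Animal study of glucose levels."},
]
included, excluded = screen(records, include_terms=["randomized"], exclude_terms=["animal"])
```

Keeping the PICO elements as separate lists makes it easy for a reviewer to add or remove a synonym and regenerate the query without touching the other elements.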

AI Solution with Human Oversight:

The workflow was built in Python with an interactive interface using Gradio, allowing experts to review, validate, and refine results at every stage. A human-in-the-loop approach, powered by LangGraph, ensures accuracy and continuous improvement.
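The review-at-every-stage idea can be sketched in plain Python. This is an assumption-laden illustration, not the LangGraph-based orchestration the solution uses: `run_with_review`, the stage functions, and the `reviewer` callback are hypothetical names. The reviewer callback plays the role of the expert in the interface, either approving a stage's draft output or replacing it with a corrected version.

```python
def run_with_review(stages, reviewer, initial):
    """Run stages in order, pausing after each one for a human decision.

    stages   -- list of (name, function) pairs; each function maps state -> draft
    reviewer -- callable (name, draft) -> None to approve, or a corrected draft
    initial  -- starting state passed to the first stage
    Returns the final state and an audit log of (stage name, was_edited) pairs.
    """
    state = initial
    audit_log = []
    for name, stage in stages:
        draft = stage(state)
        correction = reviewer(name, draft)  # None means "approved as is"
        state = draft if correction is None else correction
        audit_log.append((name, correction is not None))
    return state, audit_log


# Hypothetical stages that just append a marker for the work they would do.
stages = [
    ("define_question", lambda s: s + ["pico"]),
    ("build_strategy", lambda s: s + ["mesh_terms"]),
]


def reviewer(name, draft):
    # Simulated expert: approves the question, edits the search strategy.
    if name == "build_strategy":
        return draft + ["expert_added_term"]
    return None


final_state, audit_log = run_with_review(stages, reviewer, initial=[])
```

Recording which stages were edited gives a simple trail of where expert input changed the automated output, which is one way such corrections could feed later refinement.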

The system generates a clear Excel report summarizing included and excluded references, while also enabling users to revisit and refine research queries over time.
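A report of this kind can be sketched with the Python standard library. The example writes CSV rather than Excel so that it runs without extra dependencies; the column names, the `write_screening_report` function, and the sample records are assumptions made for illustration, not the layout of the actual report.

```python
import csv
import io


def write_screening_report(included, excluded, out):
    """Write one row per reference, marking it as included or excluded.

    `out` is any text file-like object. Excluded records may carry a
    'reason' key explaining which criterion they failed.
    """
    writer = csv.DictWriter(out, fieldnames=["decision", "pmid", "title", "reason"])
    writer.writeheader()
    for rec in included:
        writer.writerow({"decision": "included", "pmid": rec["pmid"],
                         "title": rec["title"], "reason": ""})
    for rec in excluded:
        writer.writerow({"decision": "excluded", "pmid": rec["pmid"],
                         "title": rec["title"], "reason": rec.get("reason", "")})


# Made-up records; the identifiers are placeholders, not real PubMed IDs.
included = [{"pmid": "0000001", "title": "Trial A"}]
excluded = [{"pmid": "0000002", "title": "Study B", "reason": "animal study"}]

buffer = io.StringIO()
write_screening_report(included, excluded, buffer)
rows = list(csv.DictReader(io.StringIO(buffer.getvalue())))
```

Listing excluded references alongside the reason for exclusion keeps the screening decisions auditable when a query is revisited later.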

Technology Stack

- LangChain – Structures workflows and integrates LLMs with Python.

- LangGraph – Enables agent-based workflow orchestration.

- Gradio – Provides an interactive user interface.

- FastAPI – Builds scalable backend APIs.

- Azure OpenAI – Delivers secure LLM capabilities.

- MySQL – Manages data and references.

- Docker – Ensures consistent environments.

- AWS Elastic Container Service – Supports scalable deployment.

- AWS CodeBuild – Automates build and integration processes.

RESULTS:

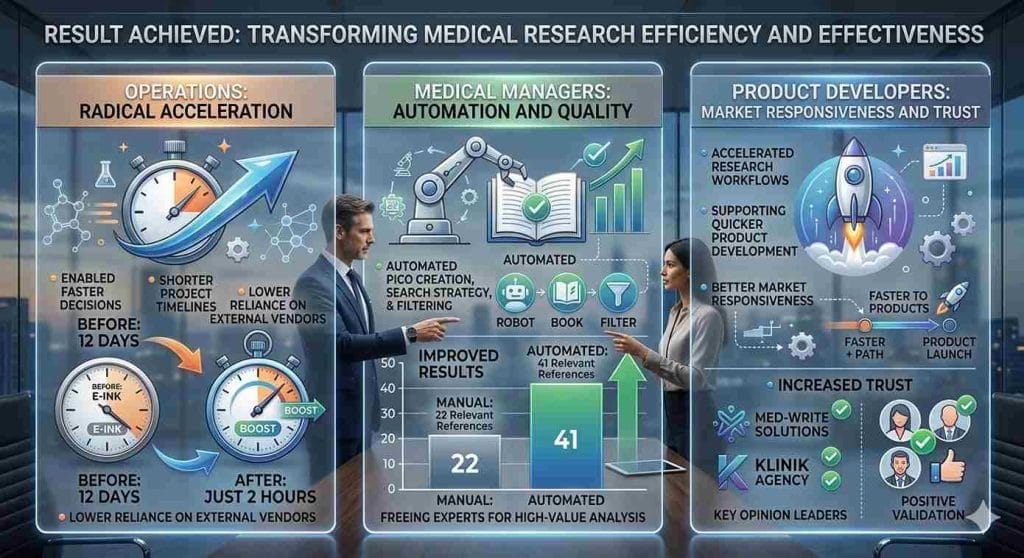

Operations

Reduced turnaround time from 12 days to just 2 hours, enabling faster decisions, shorter timelines, and lower reliance on external vendors.

Medical Managers

Automated tasks such as PICO creation, search strategy design, and filtering, freeing experts to focus on high-value analysis. The automated workflow also improved results, identifying 41 relevant references versus 22 found manually.

Product Developers

Accelerated research workflows, supporting quicker product development and better market responsiveness. Positive validation from medical writing agencies and key opinion leaders increased trust in the solution.

CLIENT REVIEW:

In healthcare, speed and accuracy are essential. By leveraging LLM-powered automation, we significantly accelerated literature reviews and reduced the manual burden on experts. This not only improved efficiency but also enabled faster, more informed decision-making across research and clinical teams.

— Dr. Emily Carter, Chief Medical Officer