The Identity Crisis: Why 2026 is the Year of the Deepfake

In 2026, you can no longer trust your eyes or ears when you see a video online. Deepfakes have moved past being funny memes; they are now high-level security threats. Attackers are using these tools for “Brand-Jacking,” where they create a fake video of a leader to crash a stock price or trick employees into giving away secrets.

Here’s the thing: a deepfake isn’t just a trick. It is a digital breach of your identity. If someone can fake your voice, they can bypass voice-activated security or convince your team to move millions of dollars to a fraudulent account. It’s a fast-moving problem that traditional antivirus software just can’t catch.

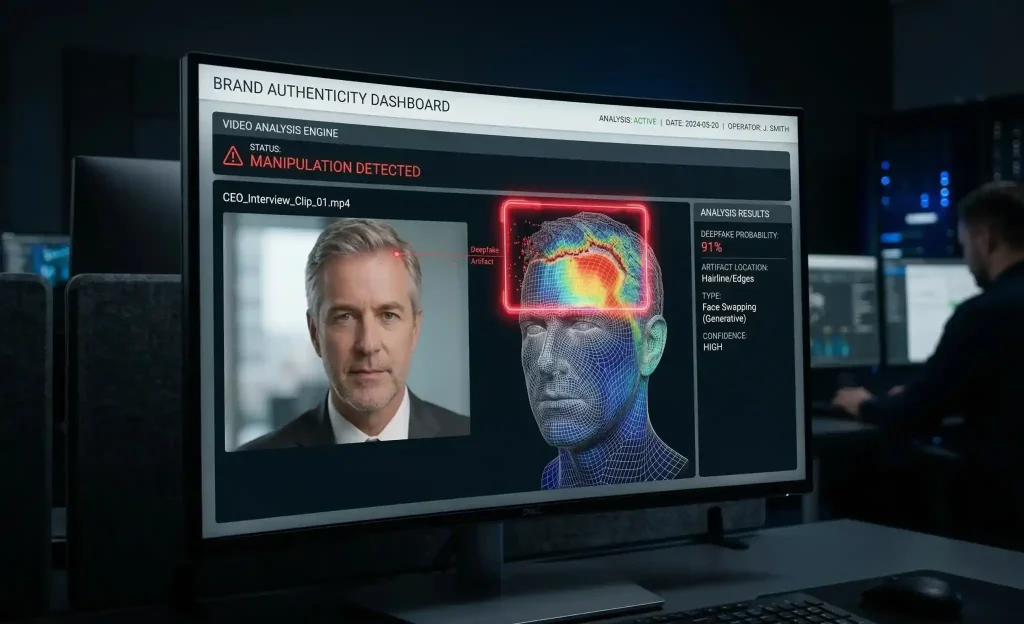

Spotting the Fake: How AI Detection Works

We’ve reached a point where the human eye isn’t enough to spot a fake. In 2026, synthetic videos have lost the “waxy” skin and weird blinking that used to give them away. To catch them, we have to use AI to fight AI.

We use multimodal analysis to scan content. This means we don’t just look at the face; we check the audio frequencies and the metadata at the same time. We look for “digital artifacts”: tiny inconsistencies in the pixels and the audio signal that appear when a face is mapped onto a body. They are invisible to a person but detectable by a detection engine. It’s about building a layer of trust back into your digital media.
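To make the idea of multimodal analysis concrete, here is a minimal sketch of how scores from separate detectors could be combined into one verdict. It assumes three upstream detectors already exist and each returns a probability between 0 and 1; the names `ModalityScores`, `fuse_scores`, `classify`, the weights, and the thresholds are all illustrative assumptions, not a description of any specific product.

```python
from dataclasses import dataclass


@dataclass
class ModalityScores:
    """Per-modality probabilities (0.0 to 1.0) that the content is synthetic."""
    visual: float    # hypothetical face/frame artifact detector
    audio: float     # hypothetical audio-frequency detector
    metadata: float  # hypothetical metadata consistency check


# Illustrative weights only; a real system would tune these on labeled data.
WEIGHTS = {"visual": 0.5, "audio": 0.3, "metadata": 0.2}


def fuse_scores(scores: ModalityScores) -> float:
    """Return the weighted average of the three modality scores."""
    return (
        WEIGHTS["visual"] * scores.visual
        + WEIGHTS["audio"] * scores.audio
        + WEIGHTS["metadata"] * scores.metadata
    )


def classify(scores: ModalityScores, threshold: float = 0.6) -> str:
    """Flag content if the fused score is high or any single modality is near-certain."""
    strongest = max(scores.visual, scores.audio, scores.metadata)
    if fuse_scores(scores) >= threshold or strongest >= 0.95:
        return "likely synthetic"
    return "no strong evidence"
```

The single-modality override matters because a clip can pair clean video with a cloned voice: a weighted average alone could let a strong audio signal be diluted by the other two scores.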

Explore our AI/ML services for advanced detection and Generative AI security.

LLM Observability: Monitoring the “Brain” of the Attack

A deepfake doesn’t just appear; it is generated by a model. This is where LLM Observability comes in. By monitoring how AI models are being used, we can often spot the signs of an attack before the final video is even finished.

Think of it like a security camera for your AI systems. We look for “Agentic” behaviors—where an AI is being used to automatically generate thousands of variations of a fake video or voice clip. Our systems flag these anomalies in real-time, giving your security team a chance to shut down the threat or issue a public correction before the content goes viral. It’s about being proactive instead of just reacting to a PR disaster.
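One simple way to picture this kind of anomaly flagging is a sliding-window counter that watches how many generation requests a single account sends in a short period. The sketch below is an assumption-laden illustration: the `BurstDetector` class, the account IDs, and the limits are hypothetical, and a production observability stack would track far more signals than request volume.

```python
from collections import defaultdict, deque


class BurstDetector:
    """Flags an account that submits too many generation requests in a short window."""

    def __init__(self, max_requests: int = 100, window_seconds: float = 60.0):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self._events = defaultdict(deque)  # account id -> recent request timestamps

    def record(self, account_id: str, timestamp: float) -> bool:
        """Record one request; return True if the account now exceeds the limit."""
        events = self._events[account_id]
        events.append(timestamp)
        # Drop timestamps that have fallen out of the sliding window.
        while events and timestamp - events[0] > self.window_seconds:
            events.popleft()
        return len(events) > self.max_requests
```

For example, with `BurstDetector(max_requests=3, window_seconds=10)`, the fourth request from the same account within ten seconds returns `True`, which is the point where an alert could be raised for a human to review.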

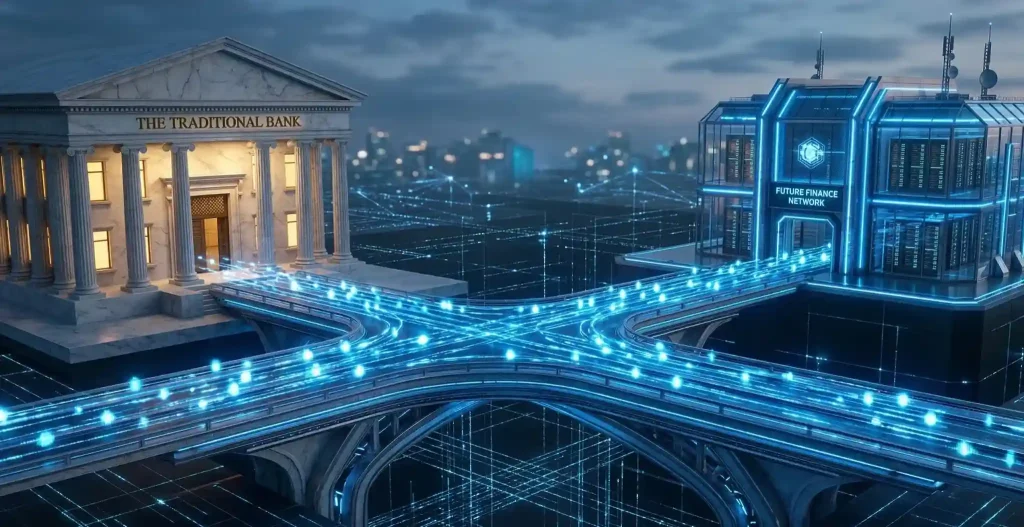

Cryptographic Proof: Digital Watermarking and C2PA

In 2026, we’ve moved past just “detecting” fakes; we are now “proving” what is real. The leading open standard for this comes from the C2PA (Coalition for Content Provenance and Authenticity). Think of its Content Credentials as a digital “nutrition label” that is designed to travel with your video or audio file and record where it came from and how it was edited.

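At Techelix, we integrate tools like Google SynthID directly into your content creation workflows. SynthID embeds an imperceptible watermark into the pixels of an image or the frames of a video. The watermark is a statistical signal rather than a cryptographic signature, so it complements C2PA’s signed metadata instead of replacing it.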

Tamper-Proof History: If someone tries to edit a video of your spokesperson to change their words, the signature on the C2PA “Content Credential” will no longer validate, so platforms and tools that check Content Credentials can flag the video as tampered.

Resilient Protection: These watermarks are designed to survive common changes like cropping, resizing, or even recording a screen with a phone camera.

Verification Portals: We build custom portals where your customers or employees can drag and drop a file to instantly verify if it came from your “Official Brand Vault”.
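The core check behind a verification portal can be sketched in a few lines. This is a simplified stand-in, not C2PA itself: real Content Credentials use certificate-based signatures over an embedded manifest, while the example below uses a shared-secret HMAC from the Python standard library just to show the principle. The key and the function names `sign_asset` and `verify_asset` are hypothetical.

```python
import hashlib
import hmac

# Hypothetical signing key; a real deployment would keep it in a key management service.
VAULT_KEY = b"example-key-do-not-use"


def sign_asset(data: bytes, key: bytes = VAULT_KEY) -> str:
    """Return a hex tag that binds the key to the SHA-256 digest of the file."""
    digest = hashlib.sha256(data).digest()
    return hmac.new(key, digest, hashlib.sha256).hexdigest()


def verify_asset(data: bytes, tag: str, key: bytes = VAULT_KEY) -> bool:
    """Recompute the tag for the uploaded file and compare it in constant time."""
    return hmac.compare_digest(sign_asset(data, key), tag)
```

If even one byte of the file changes after signing, `verify_asset` returns `False`. That is the same property that makes an edited video fail its credential check, although a byte-level check like this one does not survive re-encoding, which is where watermarking comes in.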

Explore our Generative AI services to see how we build secure, watermarked content pipelines.

Industry Focus: Pharmaceutical and Healthcare

Nowhere is the deepfake threat more dangerous than in the Pharmaceutical and Life Science industries. In 2026, we are seeing “Medical Phishing,” where deepfake audio is used to impersonate high-ranking doctors to request sensitive patient records or clinical trial data.

Because this industry is so heavily regulated, a single successful deepfake attack can lead to millions in fines under laws like HIPAA or the EU AI Act.

Secure Communications: We implement Zero-Trust architecture where every internal video call requires a secondary, out-of-band verification code sent to a secure mobile app.

Synthetic Content Audits: Our LLM Observability tools scan your internal communications for “AI-native malware”—automated bots that use deepfake voices to social-engineer your employees.
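The out-of-band verification step for video calls can be illustrated with a short-lived, single-use code. The sketch below is only an assumption about how such a check might work: the `CallVerifier` class, the six-digit format, and the two-minute lifetime are hypothetical, and delivery of the code to the mobile app is left out entirely.

```python
import hmac
import secrets
import time
from typing import Optional


class CallVerifier:
    """Issues short-lived, single-use codes for confirming a call participant."""

    def __init__(self, ttl_seconds: float = 120.0):
        self.ttl_seconds = ttl_seconds
        self._pending = {}  # call id -> (code, expiry time)

    def issue_code(self, call_id: str, now: Optional[float] = None) -> str:
        """Create a 6-digit code to be delivered through the separate channel."""
        now = time.time() if now is None else now
        code = f"{secrets.randbelow(10**6):06d}"
        self._pending[call_id] = (code, now + self.ttl_seconds)
        return code

    def confirm(self, call_id: str, submitted: str, now: Optional[float] = None) -> bool:
        """Accept the code once, and only before it expires."""
        now = time.time() if now is None else now
        entry = self._pending.pop(call_id, None)  # removed on any attempt: single use
        if entry is None:
            return False
        code, expires_at = entry
        return now <= expires_at and hmac.compare_digest(code.encode(), submitted.encode())
```

Because the code travels over a different channel than the call, an attacker who has cloned a face or voice still cannot complete the check without also controlling the recipient’s device.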

A 3-Step Roadmap to Secure Your Voice

Protecting your brand’s likeness is a journey, not a one-time fix. Here is how we get it done in 2026:

Map Your Vulnerabilities: We start by auditing your “Public Identity Footprint.” Which executives are most visible? Which communication channels (like Zoom or Teams) are used for high-value approvals?

Deploy Autonomous Defense: We integrate real-time AI detectors at your email and communication gateways. These tools act as a “security guard,” flagging any synthetic audio or video before it reaches your team.

Establish a “Source of Truth”: We move your official media assets into a secure cloud vault with mandatory C2PA Content Credentials and watermarking. Every piece of content your brand releases will have a verifiable “digital birth certificate”.

Summary: Trust is Your Most Valuable Asset

In 2026, the brands that win are the ones people can actually believe. If your customers can’t tell the difference between your real CEO and a hacker’s puppet, your business is at risk.

Deepfake Defense isn’t just about cybersecurity; it’s about protecting the “Trust Equity” you’ve spent years building. By using a layered approach of AI Observability, Digital Watermarking, and Zero-Trust protocols, you can ensure that your brand’s voice remains yours and yours alone.

Ready to secure your brand’s future?

Talk to a Cybersecurity Expert at Techelix. Let’s build your deepfake defense roadmap today.
